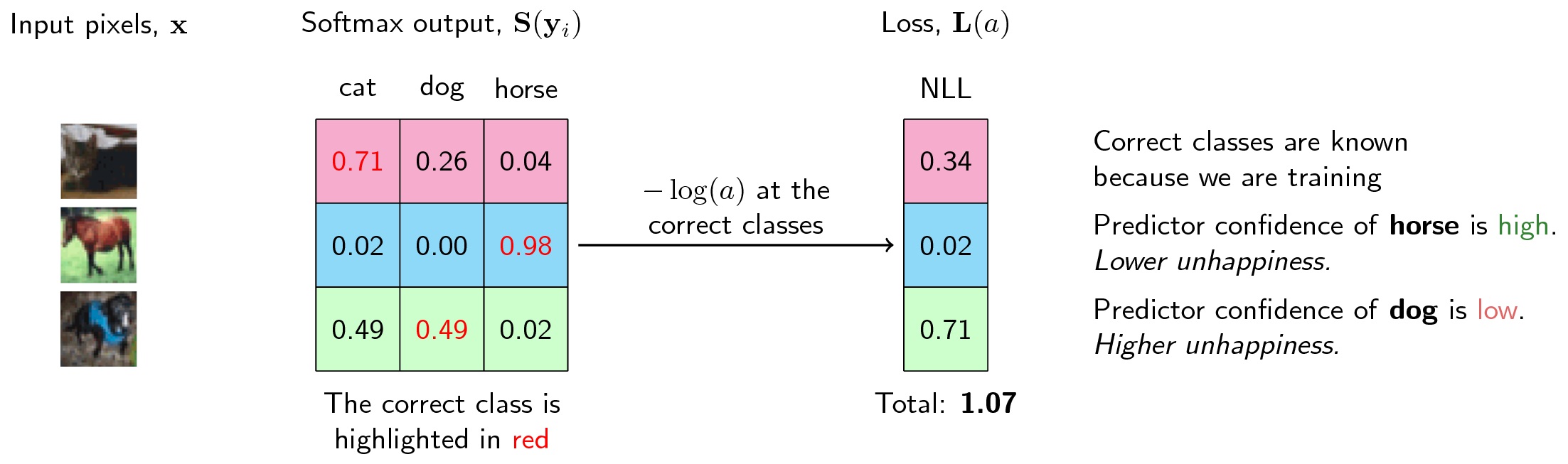

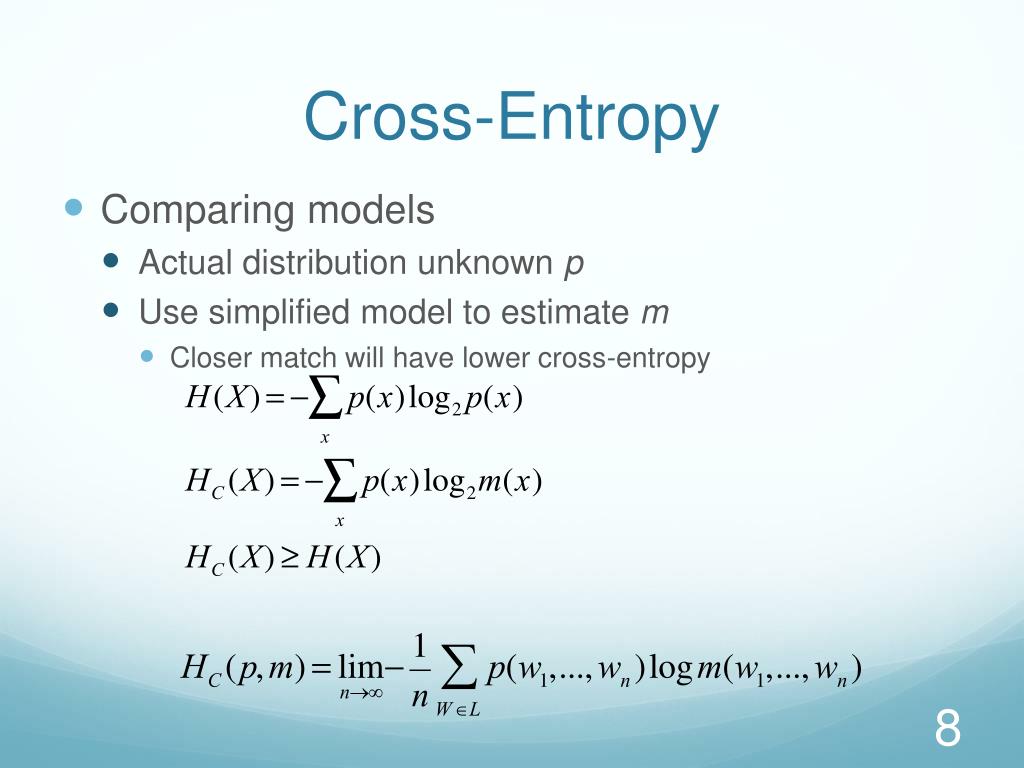

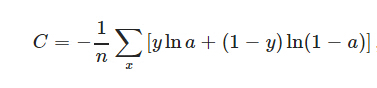

When the event has an outcome of 1, \(y_i\) is 1. When it has an outcome of 0, \(y_i\) is 0. In the previous formulas, as you loop through the classes, you set \(y_j\) to 1 whenever the outcome is the class being considered and to 0 otherwise.

The accompanying image summarizes the many confusing differences between these formulas.

I don’t know if there is a single best place to learn about cross-entropy, but below are a few places that were helpful. If you read them, make sure you think about (a) how they notate the true distribution and the approximating distribution, (b) whether they are writing about single events or multiple events, (c) whether they are writing about binary classes or multiple classes, and (d) whether the \(y\)’s are indicators or labels.

They cover, in order: information theory notation for the single event case with multiple classes; both notations for both the single and multiple event cases with multiple classes; and ML notation for multiple events with binary classes.
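To make the indicator-versus-label distinction concrete, here is a minimal Python sketch. It assumes natural logarithms and an average over events (some sources sum instead), and the function names are illustrative, not taken from any library. It shows the binary case with \(y_i \in \{0, 1\}\), the multi-class case with indicators \(y_j\), and the same multi-class loss computed from integer class labels.

```python
import math

def binary_cross_entropy(y, p):
    """Binary classes, multiple events.
    y[i] is the indicator (0 or 1) for event i; p[i] is the predicted P(outcome = 1)."""
    total = 0.0
    for y_i, p_i in zip(y, p):
        total += -(y_i * math.log(p_i) + (1 - y_i) * math.log(1 - p_i))
    return total / len(y)  # assumption: average over events

def multiclass_cross_entropy_indicators(Y, P):
    """Multiple classes, multiple events, indicator form.
    Y[i][j] is 1 if event i's outcome is class j, otherwise 0."""
    total = 0.0
    for y_row, p_row in zip(Y, P):
        # Terms with y_ij == 0 contribute nothing, so skip them (also avoids log(0)).
        total += -sum(math.log(p_ij) for y_ij, p_ij in zip(y_row, p_row) if y_ij)
    return total / len(Y)

def multiclass_cross_entropy_labels(labels, P):
    """Same loss, but labels[i] is the index of the true class instead of an indicator row."""
    total = 0.0
    for label, p_row in zip(labels, P):
        total += -math.log(p_row[label])
    return total / len(labels)

# Two events, three classes: the true classes are 1 and 0.
P = [[0.2, 0.7, 0.1], [0.5, 0.3, 0.2]]
indicators = [[0, 1, 0], [1, 0, 0]]
labels = [1, 0]
print(multiclass_cross_entropy_indicators(indicators, P))
print(multiclass_cross_entropy_labels(labels, P))
```

The two multi-class functions print the same value, because an indicator row simply selects the log-probability of the true class. The binary function is the two-class special case, with `p` playing the role of the class-1 probability.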
